“OneStream Architecture is not about how many cubes you build — it’s about how intentionally you design data units, extensibility, and aggregation boundaries to support consolidation, planning, and analysis without collapsing performance.”

I take a firm position: most architectural failures in OneStream are not functional gaps — they are cube design decisions made without understanding data unit size, extensibility, and dynamic behavior trade-offs. The architecture decision between monolithic, linked, exclusive, hybrid, or dynamic cubes directly determines whether close cycles accelerate or degrade over time.

Below is how I approach it — and why.

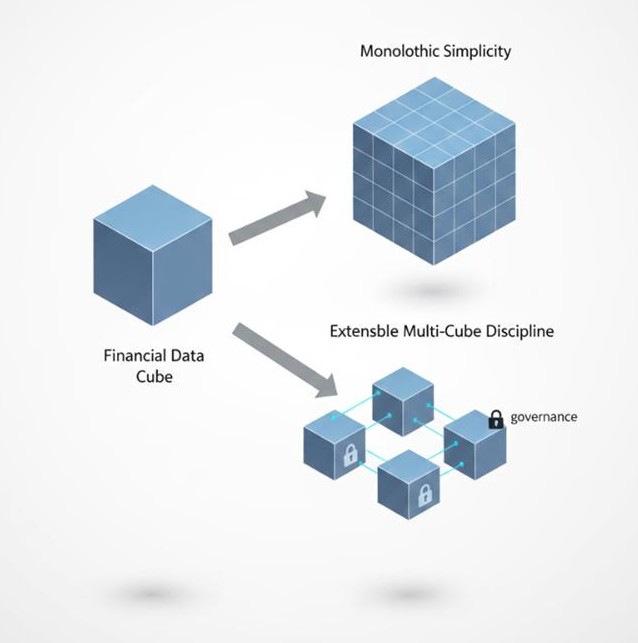

The single-cube design is appealing. One workflow structure. One consolidation path. One set of dimensions. Clean governance.

In statutory consolidation scenarios with controlled dimensionality and predictable data unit sizes (up to roughly 250k records), this works extremely well. Consolidations complete in minutes, cube views render predictably, and calculation status remains manageable.

But the tension appears when:

Once data units approach large sparse thresholds (~750k+ records), performance shifts from metadata-driven efficiency to CPU-driven stress.

Consolidation time increases not because OneStream is slow — but because the architecture ignored data unit physics.

My conclusion:

A monolithic cube is excellent for tightly governed statutory consolidation. It becomes fragile when used as an analytics warehouse.

Extensible Dimensionality is one of the most powerful features in OneStream architecture. It allows the corporate team to maintain a standard dimension while business units extend it locally for management reporting.

This is critical in real-world environments:

In consolidation and planning use cases, this gives structural flexibility without surrendering governance.

However — extensibility does not reduce data unit size. It controls hierarchy behavior and dimensional inheritance. Performance still depends on:

I have seen teams assume extensibility is a scaling mechanism. It isn’t. It is a modeling discipline mechanism.

Use extensibility to protect the model.

Do not rely on it to fix performance.

When transactional dimensions (SKU, Project, Employee) enter the model, standard cube assumptions break.

Sparse dimensions increase:

Placing a UD#Top member in a report forces runtime aggregation across potentially thousands of children. That is not a reporting issue — that is an architectural decision manifesting at query time.

In planning and forecasting environments, especially with rolling forecasts, this cost multiplies with every additional period and scenario.

My architectural rule:

If transactional detail is required for analytics but not required for consolidation logic, isolate it.

Do not let analytical density contaminate consolidation data units.
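To make the isolation rule concrete, the sketch below counts records at two grains: with a transactional dimension attached, and collapsed to the grain consolidation logic needs. It is plain illustrative Python, not OneStream API code. The record layout, the member names, and the `collapse_detail` helper are all assumptions invented for this example.

```python
from collections import defaultdict

# Hypothetical flat records: (entity, account, sku, amount).
# SKU stands in for any transactional dimension (Project, Employee, ...).
records = [
    ("US01", "Revenue", "SKU-001", 120.0),
    ("US01", "Revenue", "SKU-002", 80.0),
    ("US01", "Revenue", "SKU-003", 50.0),
    ("US01", "COGS", "SKU-001", -70.0),
    ("US01", "COGS", "SKU-002", -40.0),
    ("DE01", "Revenue", "SKU-001", 200.0),
]


def collapse_detail(rows):
    """Sum amounts over the transactional dimension, keeping entity and account."""
    totals = defaultdict(float)
    for entity, account, _sku, amount in rows:
        totals[(entity, account)] += amount
    return dict(totals)


# The consolidation-grain view carries no SKU detail at all.
consolidation_rows = collapse_detail(records)

print("detail-grain records:", len(records))
print("consolidation-grain records:", len(consolidation_rows))
```

In this toy data set the collapsed view holds half as many records as the detail view. With thousands of SKUs per entity the gap widens accordingly, which is the reason for keeping the detail outside the consolidation data units.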

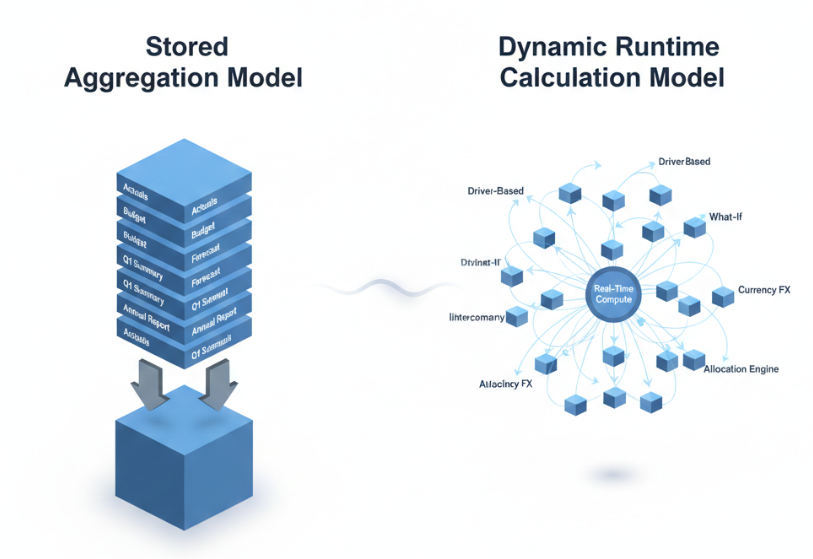

Hybrid cube design intentionally avoids storing parent-level aggregation. Parent values are dynamically calculated using API-based entity aggregation.

This is ideal when:

In forecasting and scenario modeling use cases, hybrid designs can dramatically reduce storage footprint.

But here is the trade-off:

You are exchanging stored stability for runtime flexibility.
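The trade-off can be sketched in a few lines. The example below is a simplified illustration in plain Python, not OneStream code. The entity hierarchy, the values, and both function names are hypothetical, and the aggregation is a straight sum that ignores translation, eliminations, and ownership logic.

```python
# Hypothetical entity hierarchy: parent -> children.
hierarchy = {
    "Total": ["NA", "EMEA"],
    "NA": ["US01", "CA01"],
    "EMEA": ["DE01", "FR01"],
}

# Base-level values only; in the hybrid-style approach nothing else is stored.
base_values = {"US01": 100.0, "CA01": 40.0, "DE01": 70.0, "FR01": 30.0}


def aggregate_on_demand(entity):
    """Compute a value at query time by walking down to the base entities."""
    if entity in base_values:
        return base_values[entity]
    return sum(aggregate_on_demand(child) for child in hierarchy[entity])


def precompute_parents():
    """Stored-style alternative: materialize every parent once, up front."""
    stored = dict(base_values)
    for parent in hierarchy:
        stored[parent] = aggregate_on_demand(parent)
    return stored


stored_values = precompute_parents()

print("stored cells, hybrid-style:", len(base_values))
print("stored cells, stored-style:", len(stored_values))
print("Total on demand:", aggregate_on_demand("Total"))
```

The on-demand version stores fewer cells but repeats the hierarchy walk on every query. The precomputed version pays once and then reads a fixed value, which is the stability a statutory close tends to want.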

For analytics-driven FP&A environments, I favor hybrid.

For statutory close, I do not.

Dynamic Cube Services allow real-time data surfacing from external systems or other cubes without traditional workflow loading.

This is powerful for:

Dynamic dimensions eliminate stored metadata and instead source their members from external systems. Data bindings control share vs. copy behavior. Workspace assemblies manage refresh cadence.

But dynamic architecture introduces complexity:

In consolidation environments, dynamic cubes must be used carefully. They can participate in consolidation sequences — but design mistakes propagate quickly.

Dynamic cubes are not “faster cubes.”

They are purpose-built surfaces for specific data patterns.

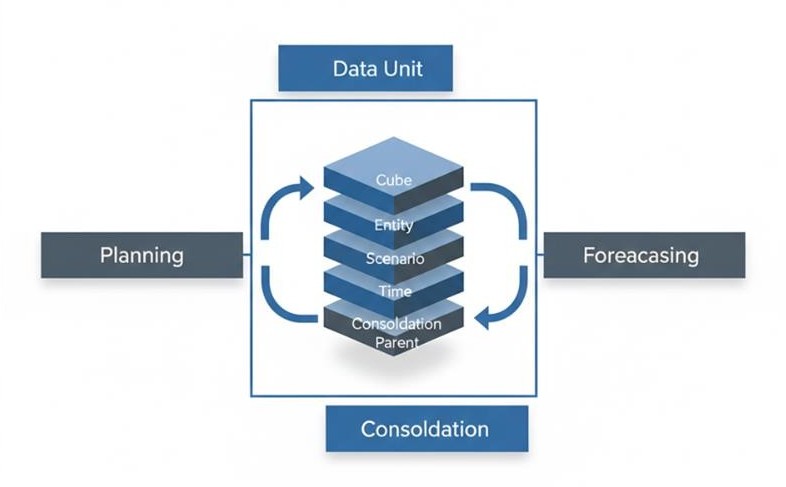

Every OneStream architecture decision ultimately revolves around the data unit:

Cube + Entity + Parent + Consolidation + Scenario + Time
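As a rough way to reason about this, the six-part key above can be modeled as a tuple and used to count records per data unit. The sketch below is illustrative Python, not a OneStream API. The `DataUnitKey` type, the member names, and the intermediate "watch" band are assumptions; only the ~250k and ~750k figures come from the thresholds quoted earlier in this article.

```python
from collections import Counter
from typing import NamedTuple


class DataUnitKey(NamedTuple):
    """The six dimensions that identify a data unit, per the definition above."""
    cube: str
    entity: str
    parent: str
    consolidation: str
    scenario: str
    time: str


# Figures quoted earlier in the article; the band between them is an assumption.
COMFORTABLE_MAX = 250_000
LARGE_SPARSE_MIN = 750_000


def classify(record_count):
    """Label a data unit by record count against the two thresholds."""
    if record_count <= COMFORTABLE_MAX:
        return "comfortable"
    if record_count < LARGE_SPARSE_MIN:
        return "watch"
    return "large"


def count_by_data_unit(keys):
    """Count how many records fall into each data unit."""
    return Counter(keys)


# Hypothetical sample: one key per record.
us = DataUnitKey("Finance", "US01", "NA", "Local", "Actual", "2024M1")
de = DataUnitKey("Finance", "DE01", "EMEA", "Local", "Actual", "2024M1")
counts = count_by_data_unit([us, us, us, de])

for key, n in counts.items():
    print(key.entity, n, classify(n))
```

Run against a real extract of record keys, a profile like this shows which entities dominate data unit size before any cube type is chosen.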

If you misidentify the controlling dimension — typically Entity — you will:

Before choosing cube types, I ask:

Architecture is not about cube count.

It is about controlling dimensional explosion.

The most common failure pattern I see:

Trying to serve consolidation, operational analytics, driver-based planning, and scenario simulation inside a single architectural model.

Technically possible.

Architecturally unstable.

Over time:

The risk is not system failure — it is architectural entropy.

Once entropy sets in, even small enhancements create cascading recalculation effects.

If you want predictable close cycles, scalable forecasting, and controlled extensibility:

OneStream Architecture forces a decision: build for simplicity today or design for controlled scale tomorrow.

I advocate disciplined multi-cube architecture with intentional extensibility and targeted hybrid/dynamic usage.

Not because it is more complex — but because enterprise EPM environments inevitably grow.

And in OneStream, growth punishes architectural shortcuts.

Design the data unit correctly, and consolidation, planning, forecasting, and close will remain predictable.

Design it casually, and performance will become your governance problem.

Rajan Shah

Technical Manager

Rajan Shah is a Technical Manager with OneStream expertise at Solution Analysts. He brings almost a decade of experience and a genuine passion for software development to his role. He is a skilled problem solver with a keen eye for detail, and his expertise spans a diverse range of technologies, including Ionic, Angular, Node.js, Flutter, React Native, PHP, and iOS.
